How to use prebuilt evaluators

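LangSmith integrates with the open-source openevals package to provide a suite of prebuilt, ready-to-use evaluators that you can use right away as a starting point for evaluation.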

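Note

This how-to guide demonstrates how to set up and run one type of evaluator (LLM-as-a-judge), but many other types are available. See the openevals and agentevals repositories for a complete list with usage examples.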

Setup

You need to install the openevals package to use the prebuilt LLM-as-a-judge evaluator.

Python:

    pip install -U openevals

TypeScript:

    yarn add openevals @langchain/core

You also need to set your OpenAI API key as an environment variable, though you can choose a different provider instead:

    export OPENAI_API_KEY="your_openai_api_key"
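Before running any evaluators, it can help to fail fast when the key is missing. The following is a minimal sketch using only the Python standard library. The helper name `missing_env_vars` is hypothetical and is not part of openevals or LangSmith.

```python
import os


def missing_env_vars(required, env=None):
    """Return the names in `required` that are unset or empty in `env`.

    `env` defaults to the current process environment; passing a plain dict
    makes the helper easy to exercise in tests.
    """
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


if __name__ == "__main__":
    # Hypothetical pre-flight check; adjust the list if you use another provider.
    missing = missing_env_vars(["OPENAI_API_KEY"])
    if missing:
        print("Missing environment variables: " + ", ".join(missing))
    else:
        print("All required environment variables are set.")
```

If you switch to a different model provider, replace `OPENAI_API_KEY` with whatever variable that provider expects.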

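We will also use LangSmith's pytest integration (for Python) and Vitest/Jest integration (for TypeScript) to run the evals. openevals also integrates seamlessly with the evaluate method. See the respective guides for setup instructions.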

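Running an evaluator

The general flow is simple: import the evaluator or factory function from openevals, then run it in your test file with inputs, outputs, and reference outputs. LangSmith automatically logs the evaluator's results as feedback.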

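Note that not every evaluator requires every parameter (for example, the exact match evaluator only needs outputs and reference outputs). Additionally, if your LLM-as-a-judge prompt requires extra variables, passing them in as kwargs will format them into the prompt.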
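The two points above can be illustrated with a small, dependency-free sketch. It is not the openevals implementation: `format_prompt`, `exact_match_sketch`, and the `context` variable are hypothetical names chosen for illustration, and the `key`/`score` result shape is an assumption about what an evaluator result looks like.

```python
def format_prompt(template: str, **variables) -> str:
    """Fill the {placeholders} in `template` with the given keyword arguments."""
    return template.format(**variables)


def exact_match_sketch(*, outputs, reference_outputs) -> dict:
    """Toy evaluator that needs only outputs and reference outputs (no inputs)."""
    return {"key": "exact_match", "score": outputs == reference_outputs}


# Hypothetical judge prompt that expects an extra `context` variable.
TEMPLATE = (
    "Question: {inputs}\n"
    "Answer: {outputs}\n"
    "Context: {context}\n"
    "Is the answer supported by the context?"
)

prompt = format_prompt(
    TEMPLATE,
    inputs="How much has the price of doodads changed in the past year?",
    outputs="Doodads have increased in price by 10% in the past year.",
    context="Doodad prices fell 50% last year.",  # extra variable passed as a kwarg
)

result = exact_match_sketch(outputs="a", reference_outputs="a")
```

With a real LLM-as-a-judge evaluator, the extra keyword argument would be passed when calling the evaluator, alongside `inputs` and `outputs`.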

Set up your test file like this:

Python:

    import pytest

    from langsmith import testing as t
    from openevals.llm import create_llm_as_judge
    from openevals.prompts import CORRECTNESS_PROMPT

    # Build an LLM-as-a-judge evaluator from the prebuilt correctness prompt
    correctness_evaluator = create_llm_as_judge(
        prompt=CORRECTNESS_PROMPT,
        feedback_key="correctness",
        model="openai:o3-mini",
    )

    # Mock stand-in for your application
    def my_llm_app(inputs: str) -> str:
        return "Doodads have increased in price by 10% in the past year."

    @pytest.mark.langsmith
    def test_correctness():
        inputs = "How much has the price of doodads changed in the past year?"
        reference_outputs = "The price of doodads has decreased by 50% in the past year."
        outputs = my_llm_app(inputs)

        # Log the example so it appears alongside the feedback in LangSmith
        t.log_inputs({"question": inputs})
        t.log_outputs({"answer": outputs})
        t.log_reference_outputs({"answer": reference_outputs})

        correctness_evaluator(
            inputs=inputs,
            outputs=outputs,
            reference_outputs=reference_outputs,
        )

TypeScript:

    import * as ls from "langsmith/vitest";
    // import * as ls from "langsmith/jest";
    import { createLLMAsJudge, CORRECTNESS_PROMPT } from "openevals";

    const correctnessEvaluator = createLLMAsJudge({
      prompt: CORRECTNESS_PROMPT,
      feedbackKey: "correctness",
      model: "openai:o3-mini",
    });

    // Mock stand-in for your application
    const myLLMApp = async (_inputs: Record<string, unknown>) => {
      return "Doodads have increased in price by 10% in the past year.";
    };

    ls.describe("Correctness", () => {
      ls.test("incorrect answer", {
        inputs: {
          question: "How much has the price of doodads changed in the past year?"
        },
        referenceOutputs: {
          answer: "The price of doodads has decreased by 50% in the past year."
        }
      }, async ({ inputs, referenceOutputs }) => {
        const outputs = await myLLMApp(inputs);
        ls.logOutputs({ answer: outputs });

        await correctnessEvaluator({
          inputs,
          outputs,
          referenceOutputs,
        });
      });
    });

feedback_key/feedbackKey 参数将用作您实验中反馈的名称。

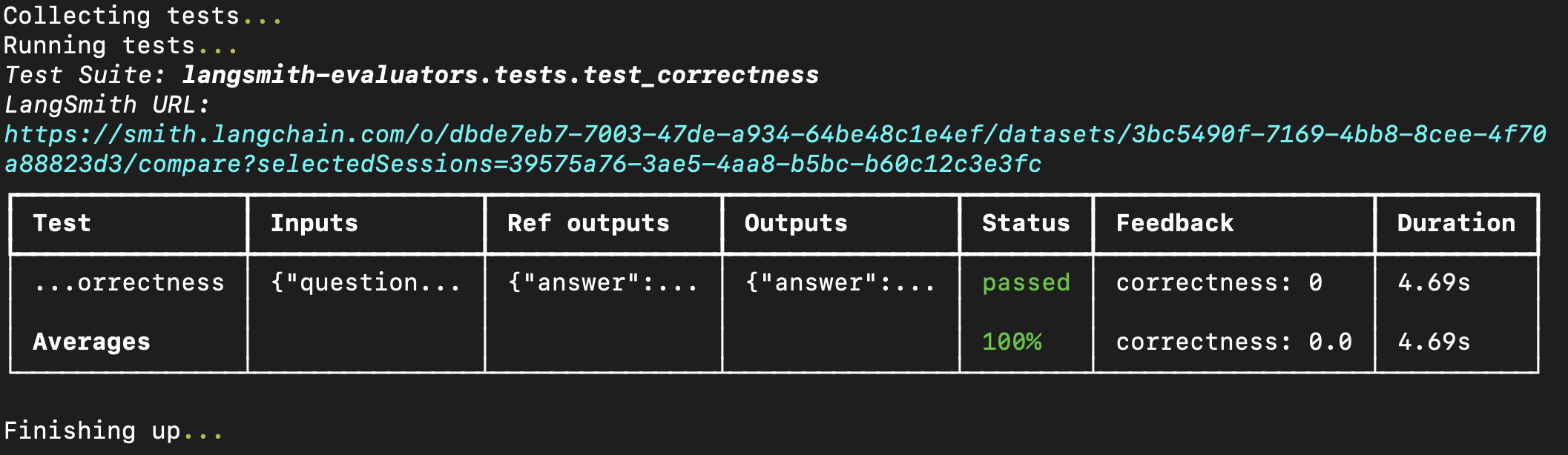

在您的终端中运行评估将得到以下结果

If you have already created a dataset in LangSmith, you can also pass prebuilt evaluators directly into the evaluate method. In Python, this requires langsmith>=0.3.11.

Python:

    from langsmith import Client
    from openevals.llm import create_llm_as_judge
    from openevals.prompts import CONCISENESS_PROMPT

    client = Client()

    conciseness_evaluator = create_llm_as_judge(
        prompt=CONCISENESS_PROMPT,
        feedback_key="conciseness",
        model="openai:o3-mini",
    )

    experiment_results = client.evaluate(
        # This is a dummy target function, replace with your actual LLM-based system
        lambda inputs: "What color is the sky?",
        data="Sample dataset",
        evaluators=[
            conciseness_evaluator
        ]
    )

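TypeScript:

    import { evaluate } from "langsmith/evaluation";
    import { createLLMAsJudge, CONCISENESS_PROMPT } from "openevals";

    const concisenessEvaluator = createLLMAsJudge({
      prompt: CONCISENESS_PROMPT,
      feedbackKey: "conciseness",
      model: "openai:o3-mini",
    });

    // Name of an existing LangSmith dataset (same placeholder as the Python example)
    const datasetName = "Sample dataset";

    await evaluate(
      // This is a dummy target function, replace with your actual LLM-based system
      (inputs) => "What color is the sky?",
      {
        data: datasetName,
        evaluators: [concisenessEvaluator],
      }
    );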
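Conceptually, running evaluators over a dataset means calling the target on each example and passing the result to every evaluator. The sketch below shows that loop in plain Python under stated assumptions: it is not how LangSmith implements evaluate, the names `run_experiment_sketch` and `short_answer` are hypothetical, and the example dictionaries are a simplified stand-in for dataset examples.

```python
def run_experiment_sketch(target, examples, evaluators):
    """Call `target` on each example and collect one feedback dict per evaluator."""
    rows = []
    for example in examples:
        outputs = target(example["inputs"])
        feedback = [
            evaluator(
                inputs=example["inputs"],
                outputs=outputs,
                reference_outputs=example["outputs"],
            )
            for evaluator in evaluators
        ]
        rows.append({"outputs": outputs, "feedback": feedback})
    return rows


def short_answer(*, inputs, outputs, reference_outputs):
    """Toy stand-in for a conciseness judge: passes answers of at most 10 words."""
    return {"key": "conciseness", "score": len(outputs.split()) <= 10}


# Simplified stand-in for a dataset with one example.
examples = [
    {"inputs": {"question": "What color is the sky?"}, "outputs": {"answer": "Blue."}},
]

rows = run_experiment_sketch(lambda inputs: "Blue.", examples, [short_answer])
```

Each evaluator receives the same keyword arguments, which is why an evaluator created once can be reused both inside a test file and in a dataset-level run.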

For a complete list of available evaluators, see the openevals and agentevals repositories.